CHiME-8 NOTSOFAR Challenge Prize Winners

A collaboration between researchers from the Brno University of Technology (BUT) and the HLTCOE was awarded the prize for the most efficient, novel, or practical submission to Task 2, NOTSOFAR, of the 8th edition of the CHiME challenge. Unlike other approaches, which rely on large ensembles, training on large amounts of synthetically overlapped speech, or multiple microphones for beamforming, the submission proposed a simple means of repurposing existing ASR systems, such as Whisper, to perform target-speaker ASR by conditioning on the outputs of a diarization system. The approach was presented at the 2024 CHiME workshop, a satellite event of Interspeech 2024 in Kos, Greece. Read more about the submission here.

HLTCOE and JHU Researchers’ Novel Approach to Multilingual Language Models Featured in the Johns Hopkins Hub

An article in the Johns Hopkins Hub highlights HLTCOE and CLSP collaborators’ research on multilingual language models (MLMs).

The research team, which includes Haoran Xu, Philipp Koehn, Kenton Murray, and Benjamin Van Durme, has developed a novel approach to optimizing MLMs for multiple languages. They recently presented their work at the 2023 Conference on Empirical Methods in Natural Language Processing (EMNLP).

Their method, called Language-Specific Matrix Synthesis, employs low-rank matrices to reduce the number of parameters needed for a model to function in each new language. In tests with a model capable of understanding up to 95 different languages, the team has shown that their method allows for a significant reduction in a language model’s size without compromising its performance.
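As a rough illustration of why low-rank matrices reduce per-language parameter counts, the sketch below compares a full weight matrix per language with a pair of rank-r factors per language. It is not the authors' implementation: the layer dimensions, the rank, and the function names are hypothetical, and only the figure of 95 languages comes from the text above.

```python
# Illustrative parameter counting only; not the Language-Specific Matrix
# Synthesis implementation. Dimensions and rank below are assumed values.

def dense_params(d_in: int, d_out: int) -> int:
    """Parameters in one full d_in x d_out matrix."""
    return d_in * d_out


def low_rank_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in two factors (d_in x rank) and (rank x d_out)."""
    return rank * (d_in + d_out)


d_in, d_out, rank = 1024, 1024, 8   # hypothetical layer size and rank
num_languages = 95                  # language count mentioned in the text

dense_total = num_languages * dense_params(d_in, d_out)
low_rank_total = num_languages * low_rank_params(d_in, d_out, rank)

print(f"full matrices per language:     {dense_total:,} parameters")
print(f"low-rank factors per language:  {low_rank_total:,} parameters")
print(f"reduction factor: {dense_total / low_rank_total:.0f}x")
```

The saving grows as the rank shrinks relative to the layer dimensions, which is the general reason low-rank parameterizations are attractive when a model must carry separate parameters for many languages.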

The researchers’ objective is to apply their method to unwieldy MLMs and develop robust AI systems that can comprehend multiple languages while performing as effectively as they do in English. By reducing the size and hardware requirements of MLMs, Language-Specific Matrix Synthesis may also make it possible to deploy multilingual AI models that can handle hundreds of languages on devices of all sizes.

Whiting School Promotes No Language Left Behind initiative at Center for Language and Speech Processing

The Whiting School of Engineering recently published an in-depth piece (https://engineering.jhu.edu/magazine/2023/06/no-language-left-behind/) on the No Language Left Behind initiative at the HLTCOE’s sister center, the Center for Language and Speech Processing. The article contains interviews with CLSP Director Sanjeev Khudanpur, HLTCOE Research Scientist Matthew Wiesner, and a number of other HLTCOE affiliates.

CLSP and HLTCOE Researchers win Outstanding Paper Award at EACL 2023

A team of researchers from the HLTCOE and the Center for Language and Speech Processing was named a winner of an Outstanding Paper award at the 2023 Conference of the European Chapter of the Association for Computational Linguistics (EACL). The paper, entitled ‘Iterative Document-level Information Extraction via Imitation Learning,’ was written by a team of researchers led by first author Yunmo Chen. The full text of the paper is available at https://aclanthology.org/2023.eacl-main.136.pdf. The EACL conference was held in Dubrovnik, Croatia.

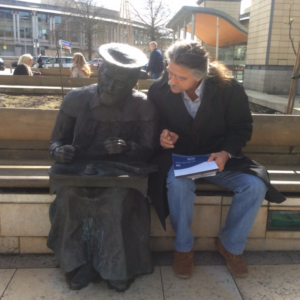

HLTCOE Principal Investigator Carey Priebe named Visiting Scholar at Oxford and Cambridge Universities

HLTCOE’s Principal Investigator, Dr. Carey Priebe of the Applied Mathematics and Statistics Department, has been named a Visiting Scholar of Pembroke College, Oxford University, and a Visiting Fellow of Trinity College, Cambridge University. Dr. Priebe is spending part of his 2023 sabbatical at the renowned MRC Laboratory of Molecular Biology, where he is working on comparative connectomics with the Marta Zlatic and Albert Cardona Labs.